Tomorrow’s Forecast: Cloudy With A 90% Chance Of Containers

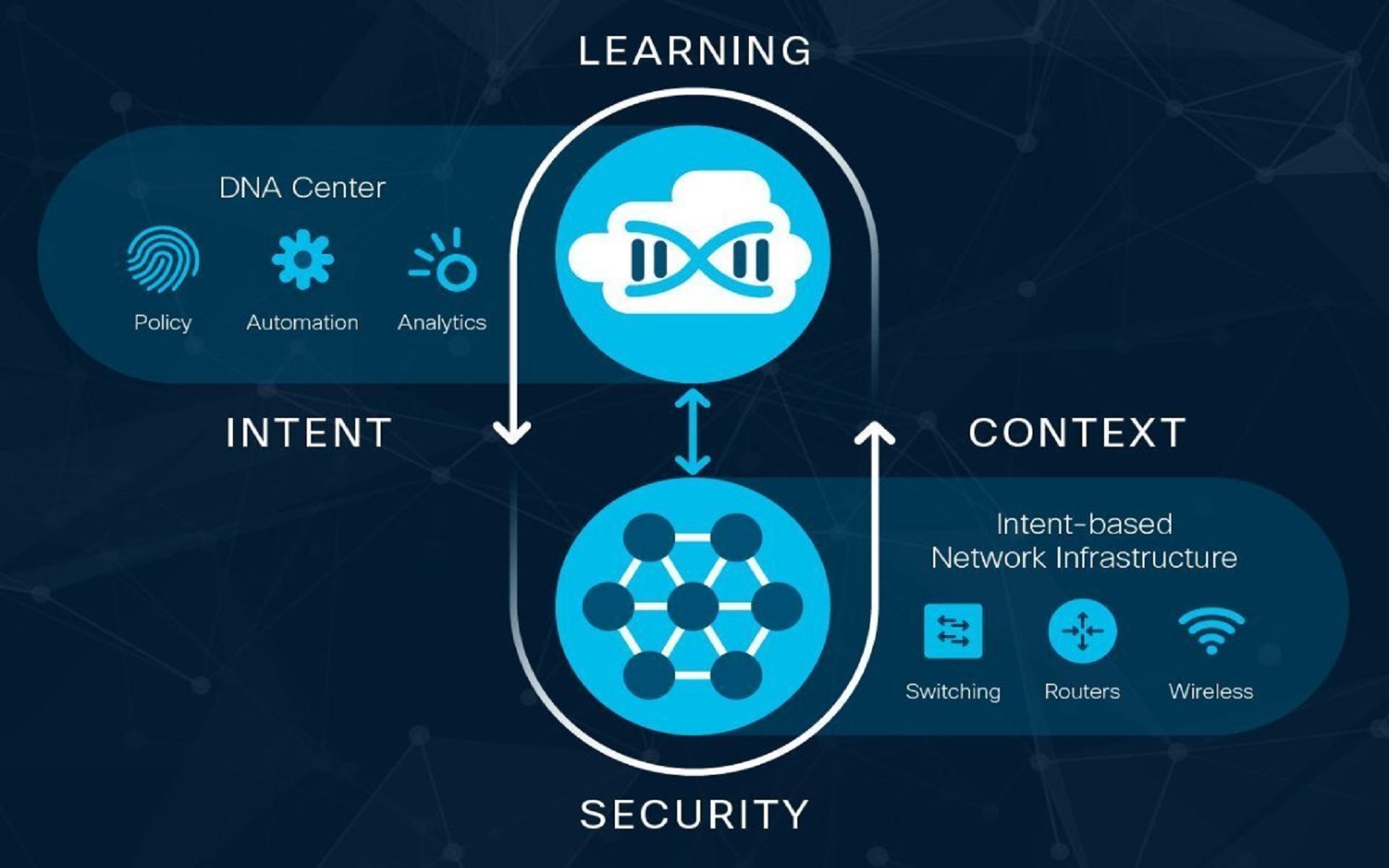

Remember the old days, back in late 2018, when your biggest question was whether a particular workload should live in the public cloud or in your data center? Today, the industry is moving quickly toward containerized, cloud native applications running in hybrid and multi-cloud environments. The same app is often distributed across multiple locations. Managing modern distributed apps across multiple clouds is a real challenge, and running Kubernetes services across private cloud and public cloud providers makes it harder.

Data centers continue to be critical for organizations’ IT operations, and organizations want their data centers to operate like the public cloud. The challenge is simplifying the management of an entire IT estate that spans public and private clouds, with different management interfaces and a mix of traditional and modern applications spread across multiple clouds. The best solution to this challenge is a consistent hybrid cloud approach, and advancements announced today by VMware and Dell Technologies make hybrid cloud even better.

VMware unveils the future of modern applications in a hybrid cloud world

Modern apps are essential to the future of every business. They are at the core of digital transformation. It’s these software investments that will define the future of all customer interactions, drive the exponential growth in revenue that powers global markets, and reshape how we leverage data for untapped insights. Being able to deliver these applications at speed is a foundational capability for organizations looking to build and maintain competitive differentiation.

To address this growing need, VMware today has announced details of two groundbreaking new offers—VMware Tanzu, a portfolio of products enabling customers to build, run and manage modern apps in a multi-cloud environment; and VMware Cloud Foundation 4, which includes the new VMware vSphere 7 release that has been rearchitected to run Kubernetes and virtualized apps side by side at scale. Dell Technologies and VMware are “all in” to offer the industry’s best solutions to support our customers as they modernize both their apps and their businesses.

We can’t say that last bit too loudly or too often. When our customers say they are going cloud native, they often have hundreds or thousands of apps that need to be replatformed. This can be incredibly disruptive to innovation and dangerous to the stability of their operations. With VMware’s announcement, supported in tight partnership with Dell Technologies infrastructure and services, you can modernize your applications at your own pace and with significantly lower risk.

Dell Technologies helps you power modern applications on any cloud with the industry’s broadest VMware-integrated portfolio

Organizations today are supporting both traditional and cloud native apps but struggle to do so effectively together and across their IT estate—private clouds, public clouds and edge locations. According to a recent Enterprise Strategy Group report, 78 percent of senior technology decision-makers at midsize and large companies say they think cloud management consistency would boost efficiency, but only five percent reported having it. This is precisely where Dell Technologies is best suited to assist.

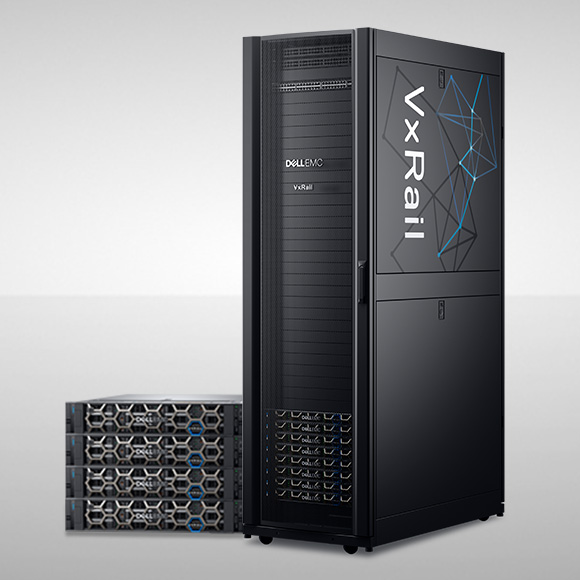

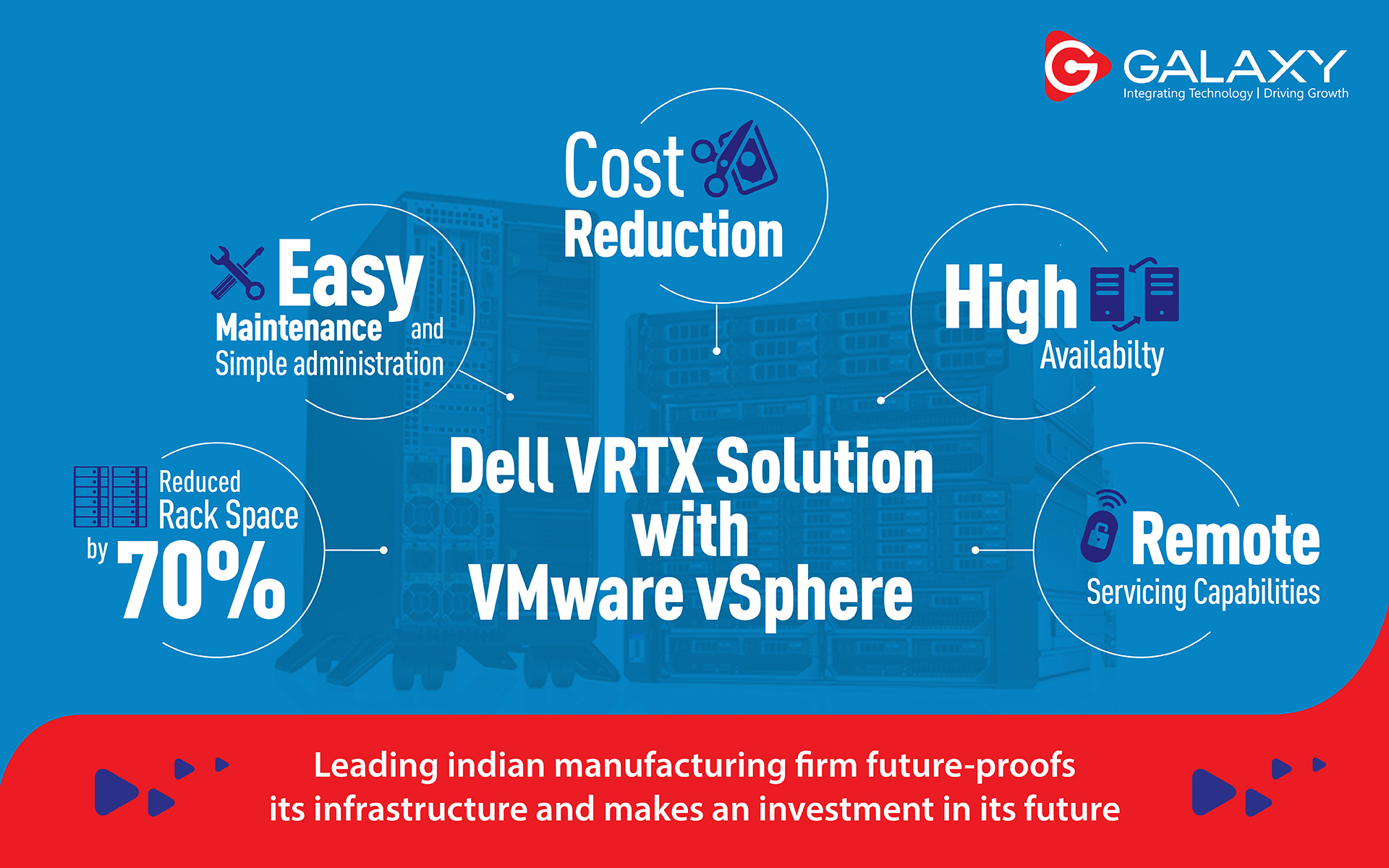

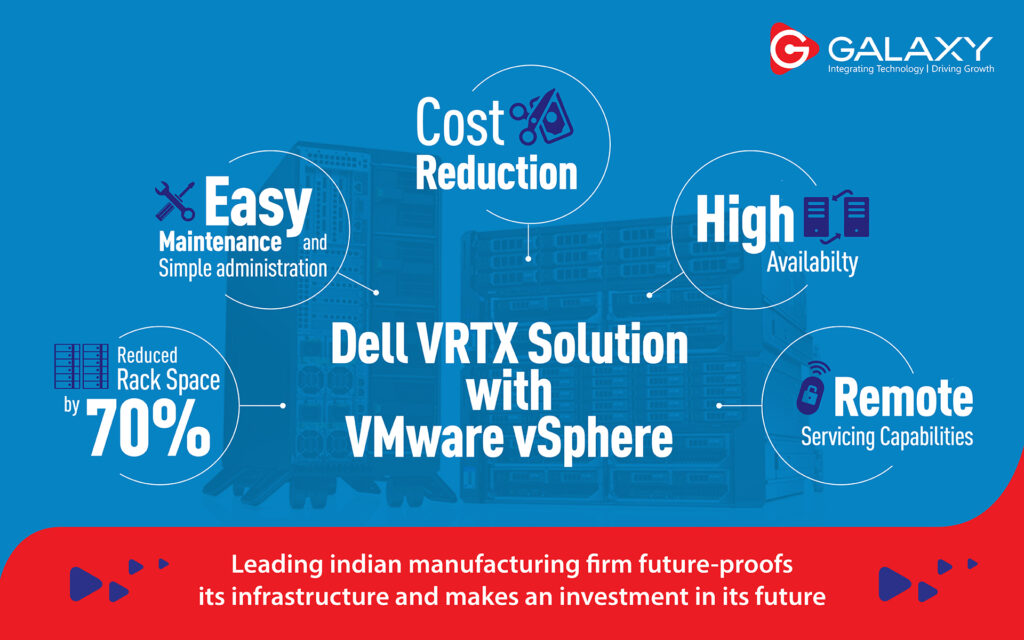

Dell Technologies Cloud Platform (VMware Cloud Foundation on VxRail) delivers a simple and direct path to modern applications. To accelerate your move to containers and a hybrid cloud operating model, Dell Technologies offers unique integration between VMware Cloud Foundation (VCF) and VxRail that supports simultaneous VM and container-based workloads on industry-leading Dell EMC PowerEdge servers and Dell EMC Storage across multiple cloud environments.

Dell Technologies Cloud Platform also delivers the fastest path to hybrid cloud. Dell Technologies Cloud Platform with VxRail—the only jointly engineered HCI system with deep VMware Cloud Foundation integration—now delivers Kubernetes at cloud scale and at cloud speed. With our synchronous release commitment for VxRail, customers can run Kubernetes on Dell Technologies Cloud Platform with vSphere 7.0 within 30 days of VMware general availability. Customers also can choose Dell Technologies Cloud Validated Designs with same-day general availability for PowerEdge servers. Through both options, Dell Technologies ensures that IT is able to empower developers with rapid access to the latest technologies for modern applications.

Additionally, with Dell Technologies on Demand, we offer flexible consumption-based pricing and an as-a-Service managed cloud experience for your on-premises data center. This also includes our ProDeploy and managed services to make implementation seamless, as well as ProSupport services, backed by more than 1,900 global VMware certifications, to help ensure high availability and optimal performance.

Data drives your modern applications in the hybrid cloud

Alongside containers, data plays a central role in this new multi-cloud world. Imagine the horror of building out a new cloud native app and deploying it seamlessly across multiple clouds…only to have everything fail because the data required to support the application isn’t available in all the necessary locations. You can either update your resume, or you can learn about the awesome data management features that are built into Dell Technologies’ hardware stack. Check out our Dell Technologies Cloud Validated Designs, which allow you to consume Dell EMC Unity XT and Dell EMC PowerMax storage as part of the Dell Technologies Cloud. These storage platforms are integrated with VMware Cloud Foundation, vSphere with Kubernetes, and the VMware automation and orchestration tools. Dell Technologies is also the first vendor to qualify external NFS and Fibre Channel (FC) Storage solutions for VMware Cloud Foundation workload domains.

We’ve got you covered with flexible and consistent data management features, replication between environments, intrinsic security across the VM/container and hardware stack, and the Dell EMC PowerProtect Data Manager for Kubernetes to protect both your traditional workloads and your modern applications.

When you need to operate a dynamic, secure environment with assured access to your data, when and where it’s needed, there’s no better partner than Dell Technologies. We’ll help you ensure that your modern apps continue to run across multiple clouds without interruption.

Source: https://blog.dellemc.com/en-us/tomorrows-forecast-cloudy-with-90-chance-of-containers/
