About the Customer

ideaForge is the pioneer and the pre-eminent market leader in the Indian unmanned aircraft systems (UAS) market. ideaForge had the largest operational deployment of indigenous UAVs across India, with an ideaForge-manufactured drone taking off every five minutes on average for surveillance and mapping. ideaForge customers have completed over 5,00,000 flights using its UAVs. ideaForge was ranked 5th globally among dual-use (civil and defense) drone manufacturers in a report published by Drone Industry Insights in December 2023.

Challenge

ideaForge faced a critical challenge in ensuring secure and efficient connectivity between its on-premises location or data center and its AWS cloud infrastructure.

The primary concerns included:

- Establishing dynamic and robust routing for seamless data transfer.

- Ensuring high availability and redundancy.

- Maintaining low latency and high performance for critical application access.

- Simplifying network management and operational overhead.

These challenges were pivotal as they directly influenced ideaForge’s operational efficiency, data security, and ability to deliver timely UAV data to their clients.

ideaForge wanted to protect network traffic both inside AWS and between its on-premises location and its AWS resources.

To achieve this goal, ideaForge began transforming its infrastructure from a fleet of on-premises servers and systems into a hybrid architecture that leverages cloud-based infrastructure as a service.

Our Solution

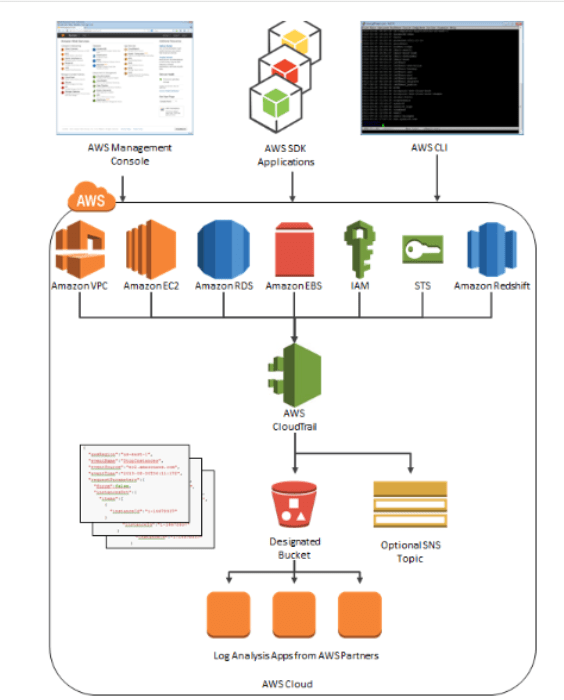

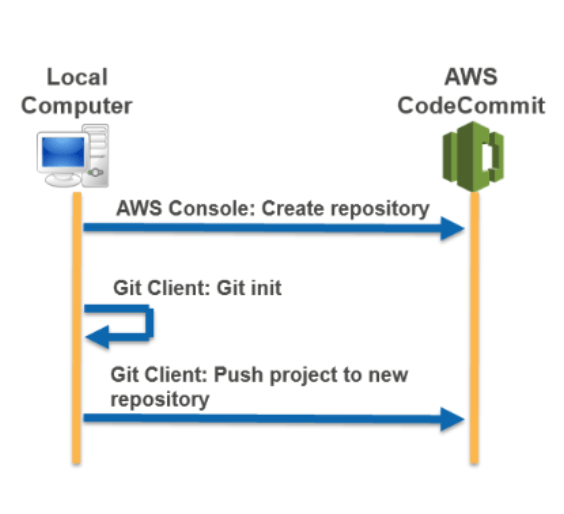

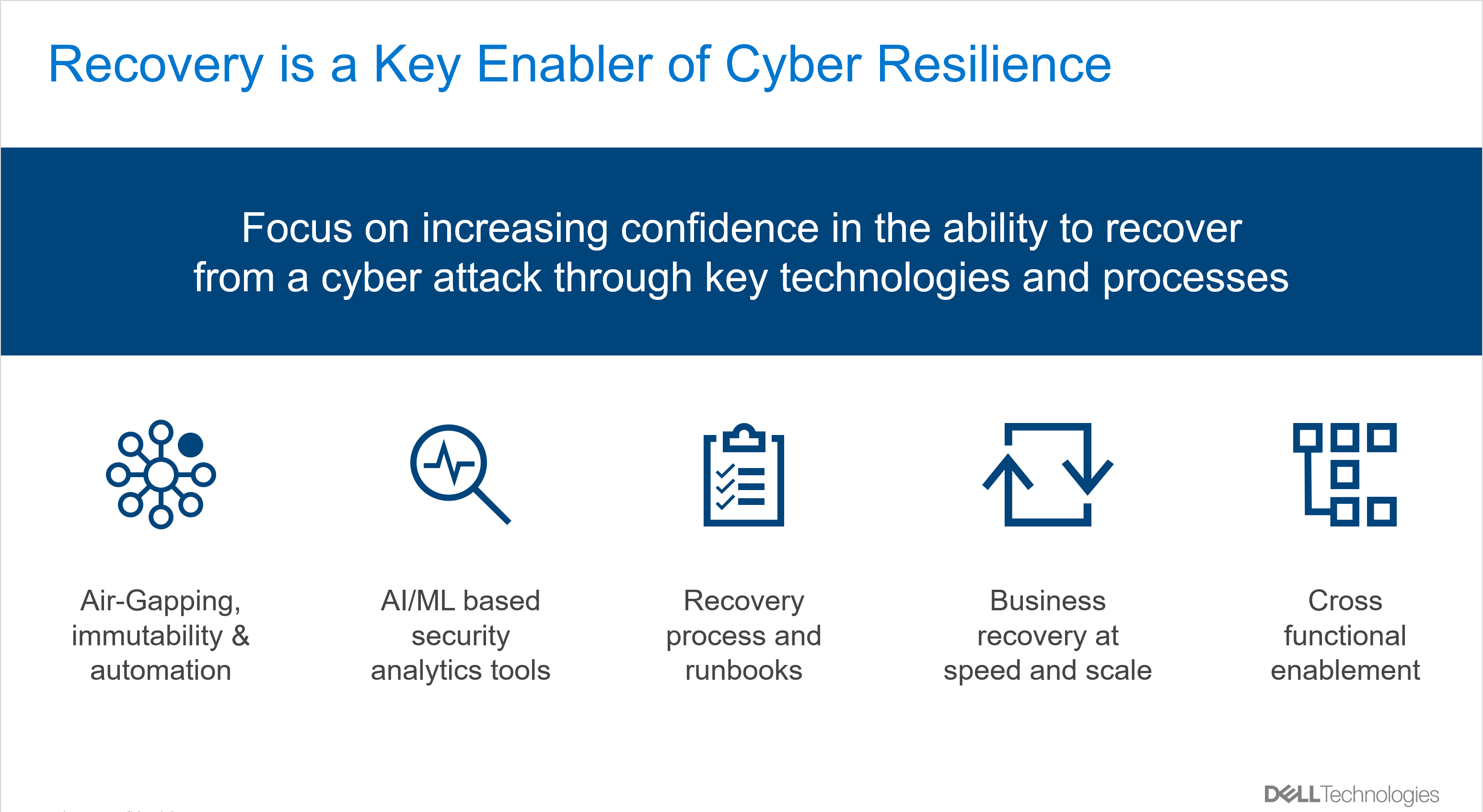

Galaxy, as a trusted Cloud IT partner, provided a robust solution by implementing an AWS Site-to-Site VPN with BGP (Border Gateway Protocol) routing. This solution provided secure, scalable, and dynamic connectivity between ideaForge’s on-premises location or data center and its AWS infrastructure.

Key components of the solution included:

- Establishing a secure IPsec VPN connection and using BGP for dynamic routing between ideaForge’s on-premises network and the AWS VPC (Virtual Private Cloud).

- Configuring redundant VPN tunnels with BGP sessions to ensure failover capabilities and continuous uptime.

- Utilizing AWS VPN to enhance bandwidth and minimize latency where necessary.

- Leveraging AWS’s management tools to monitor and manage BGP routing and VPN connections, ensuring minimal downtime and efficient network operations.
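The first two components can be sketched in Python. This is a minimal sketch under stated assumptions, not the configuration Galaxy actually deployed: it assumes a virtual private gateway already exists (the case study does not say whether a virtual private gateway or a transit gateway was used), the helper names are hypothetical, and the IP address, ASN, and gateway ID in the usage note are placeholders. The `ec2` argument is expected to behave like a boto3 EC2 client, for example `boto3.client("ec2")`.

```python
from typing import Any, Dict


def customer_gateway_params(public_ip: str, bgp_asn: int) -> Dict[str, Any]:
    """Request parameters for EC2 CreateCustomerGateway (the on-premises side)."""
    return {"Type": "ipsec.1", "PublicIp": public_ip, "BgpAsn": bgp_asn}


def vpn_connection_params(customer_gateway_id: str, vpn_gateway_id: str) -> Dict[str, Any]:
    """Request parameters for EC2 CreateVpnConnection with dynamic (BGP) routing."""
    return {
        "Type": "ipsec.1",
        "CustomerGatewayId": customer_gateway_id,
        "VpnGatewayId": vpn_gateway_id,
        # StaticRoutesOnly=False selects BGP instead of static routes.
        "Options": {"StaticRoutesOnly": False},
    }


def create_bgp_vpn(ec2: Any, public_ip: str, bgp_asn: int, vpn_gateway_id: str) -> str:
    """Create a customer gateway and a BGP-routed VPN connection; return the VPN ID.

    `ec2` is assumed to expose create_customer_gateway and create_vpn_connection
    the way a boto3 EC2 client does.
    """
    cgw = ec2.create_customer_gateway(**customer_gateway_params(public_ip, bgp_asn))
    cgw_id = cgw["CustomerGateway"]["CustomerGatewayId"]
    vpn = ec2.create_vpn_connection(**vpn_connection_params(cgw_id, vpn_gateway_id))
    return vpn["VpnConnection"]["VpnConnectionId"]
```

With real credentials, a call might look like `create_bgp_vpn(boto3.client("ec2"), "203.0.113.10", 65000, "vgw-EXAMPLE")`. Each Site-to-Site VPN connection comes with two tunnels, which is what makes the redundant-tunnel design in the second component possible.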

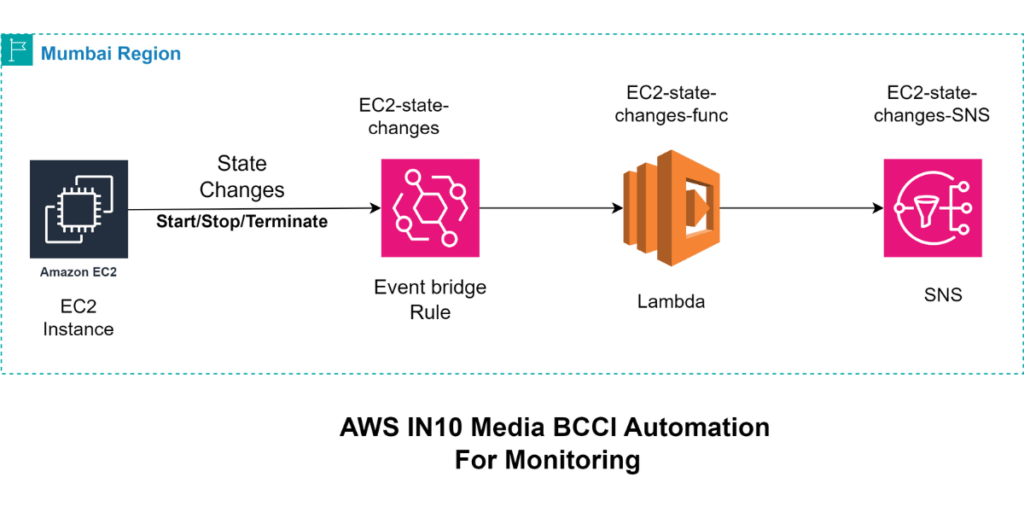

AWS Site-to-Site VPN gives visibility into local and remote network health and monitors the reliability and performance of VPN connections by integrating with Amazon CloudWatch.
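As an illustration of the CloudWatch integration, the sketch below builds the parameters for an alarm that fires when a tunnel goes down. It is an assumption-laden example rather than ideaForge's actual monitoring setup: the function name, alarm name, period, and evaluation settings are hypothetical choices. It relies on the `TunnelState` metric in the `AWS/VPN` namespace, which reports 1 when a tunnel is up and 0 when it is down.

```python
from typing import Any, Dict


def tunnel_down_alarm_params(vpn_id: str, tunnel_ip: str) -> Dict[str, Any]:
    """Build CloudWatch PutMetricAlarm parameters for one VPN tunnel.

    The alarm name, period, and evaluation settings are illustrative choices.
    """
    return {
        "AlarmName": f"vpn-tunnel-down-{vpn_id}-{tunnel_ip}",
        "Namespace": "AWS/VPN",
        "MetricName": "TunnelState",
        # One alarm per tunnel, identified by its outside IP address.
        "Dimensions": [{"Name": "TunnelIpAddress", "Value": tunnel_ip}],
        "Statistic": "Maximum",
        "Period": 300,            # seconds per evaluation window
        "EvaluationPeriods": 1,
        "Threshold": 1.0,
        # TunnelState below 1 for the whole window means the tunnel was down.
        "ComparisonOperator": "LessThanThreshold",
        # Treat missing datapoints as a failure rather than as healthy.
        "TreatMissingData": "breaching",
    }
```

The resulting dictionary can be passed to a boto3 CloudWatch client as `boto3.client("cloudwatch").put_metric_alarm(**params)`. Creating one alarm per tunnel makes it visible when redundancy is lost even though traffic is still flowing over the surviving tunnel.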

AWS Site-to-Site VPN also provides tunnel customization options such as inside tunnel IP address, pre-shared key, and Border Gateway Protocol Autonomous System Number (BGP ASN).

In addition, it supports NAT Traversal applications, allowing you to use private IP addresses behind routers on private networks with a single public IP address facing the internet.
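The tunnel customization options can be validated before they are sent to AWS. The sketch below is a hedged example: the function names are hypothetical, and the checks reflect our reading of the constraints AWS documents for these options (an inside tunnel CIDR that is a /30 within 169.254.0.0/16, and a pre-shared key of 8 to 64 alphanumeric, period, or underscore characters that does not start with zero). AWS also reserves a few specific /30 blocks that this sketch does not check, so the current AWS documentation remains the authority.

```python
import ipaddress
import re

LINK_LOCAL = ipaddress.ip_network("169.254.0.0/16")
# 8-64 characters from [A-Za-z0-9._], and the first character must not be "0".
PSK_RE = re.compile(r"[A-Za-z1-9._][A-Za-z0-9._]{7,63}")


def is_private_asn(asn: int) -> bool:
    """True if asn lies in a private-use range (16-bit or 32-bit, RFC 6996)."""
    return 64512 <= asn <= 65534 or 4200000000 <= asn <= 4294967294


def tunnel_options(inside_cidr: str, pre_shared_key: str) -> dict:
    """Validate and build one entry for the TunnelOptions list of a VPN connection."""
    net = ipaddress.ip_network(inside_cidr)
    if net.version != 4 or net.prefixlen != 30 or not net.subnet_of(LINK_LOCAL):
        raise ValueError("inside tunnel CIDR must be a /30 within 169.254.0.0/16")
    if not PSK_RE.fullmatch(pre_shared_key):
        raise ValueError("pre-shared key must be 8-64 characters of [A-Za-z0-9._] "
                         "and must not start with 0")
    return {"TunnelInsideCidr": str(net), "PreSharedKey": pre_shared_key}
```

For example, `tunnel_options("169.254.10.0/30", "example_key.1234")` returns a dictionary that could be placed in the `Options["TunnelOptions"]` list of a `create_vpn_connection` call, while `tunnel_options("10.0.0.0/30", "example_key.1234")` raises `ValueError`.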

Results and Benefits

The implementation of the AWS Site-to-Site VPN with BGP routing resulted in several significant benefits for ideaForge:

- The encrypted VPN connection ensured that all data transfers between the on-premises environment and AWS infrastructure were secure.

- BGP routing enabled automatic route updates and failover, ensuring continuous and reliable connectivity even during network changes or disruptions.

- Optimized network performance led to faster data transfers and significantly improved application performance.

- Redundancy, implemented through multiple VPN connections and carefully configured failover using BGP dynamic routing, eliminated single points of failure.

- The high-availability configuration with redundant VPN tunnels and BGP sessions delivered reliable connectivity with 99% network uptime.

- Network management was simplified with a centralized and automated routing solution, reducing administrative overhead by 50% and allowing ideaForge’s team to focus on core business activities.

Scenario:

- Before implementation:

Administrators perform network checks and updates twice a week, taking 2 hours per session.

Total time per week: 2 sessions × 2 hours = 4 hours

- After implementation:

With the new solution, administrators only need to perform these tasks once a week.

Total time per week: 1 session × 2 hours = 2 hours

- Calculation:

Weekly time savings: 4 hours - 2 hours = 2 hours saved per week (a 50% reduction)

Annual time savings: 2 hours/week × 52 weeks = 104 hours saved per year
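The scenario's arithmetic can be reproduced with a short script, which also makes it easy to plug in different session counts or durations. The variable and function names are illustrative; the input figures are the ones given in the scenario.

```python
WEEKS_PER_YEAR = 52


def weekly_hours(sessions_per_week: int, hours_per_session: float) -> float:
    """Total administrative time spent per week."""
    return sessions_per_week * hours_per_session


before = weekly_hours(2, 2)   # two 2-hour sessions per week
after = weekly_hours(1, 2)    # one 2-hour session per week

weekly_savings = before - after
reduction_pct = 100 * weekly_savings / before
annual_savings = weekly_savings * WEEKS_PER_YEAR

print(f"Weekly savings: {weekly_savings} h ({reduction_pct:.0f}% reduction)")
print(f"Annual savings: {annual_savings} h")
```

Running it reports 2 hours saved per week, which is the 50% reduction quoted above, and 104 hours saved per year.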

The solution provided a scalable framework to accommodate ideaForge’s growing network and data transfer needs as their operations expanded.

About Galaxy Office Automation Private Limited

With 36 years of experience in driving digital transformation, Galaxy Office Automation Pvt. Ltd is a trusted technology solutions provider that delivers innovative, cutting-edge solutions integrating advanced technologies. Our team of over 245 professionals is committed to continuous improvement, holding a range of esteemed AWS certifications that demonstrate our expertise in cloud architecture, DevOps, and storage solutions. We have further solidified our expertise by achieving the AWS Storage Competency, enabling us to provide tailored solutions for our valued clients in multi-cloud environments. By constantly upgrading our portfolio of solutions and skills, we stay ahead of the curve in the fast-changing digital world, ensuring our clients receive the best possible support.

The implementation of the AWS Site-to-Site VPN with BGP routing has been a game-changer for ideaForge. By addressing their security, reliability, performance, and scalability challenges, Galaxy successfully provided a solution that significantly enhanced ideaForge’s network infrastructure. The result was a 50% reduction in administrative overhead. These improvements have enabled ideaForge to achieve faster data transfers, improved application performance, and seamless scalability, positioning them for continued growth and success in their industry.